Anthropic is introducing new rules for users of its Claude chatbot. By the end of September, individuals will need to choose whether their conversations can be used to train the company’s future models. This marks a departure from its earlier practice, under which consumer data was kept only for short periods and never used in model development.

Longer Data Retention

Previously, the company deleted most consumer chats within a month unless legal or policy requirements meant they had to be stored longer. Inputs flagged for violations could be held for two years. Under the new policy, users who leave the training setting enabled will have their conversations retained for up to five years. The change affects Claude Free, Pro, Max, and Claude Code accounts. Customers using enterprise, government, education, or API services are not included.

Competitive Pressure in AI

Model developers depend on large volumes of authentic conversation data. Rival firms such as OpenAI and Google have taken similar paths, and Anthropic is now moving in the same direction. By collecting more material from everyday exchanges and coding tasks, the company gains more real-world data with which to refine its models.

Consent by Design

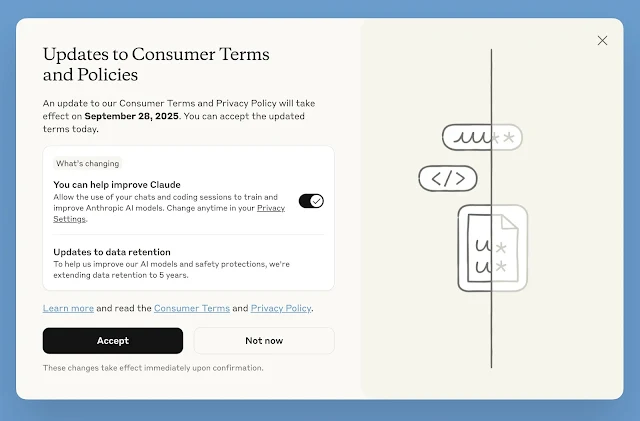

The process for gathering consent has raised concerns. New users make their choice during signup. Existing users, however, are shown a notice with a large acceptance button and, underneath it, a smaller toggle for training permissions that is already set to “on.” Some analysts have described this design as one that encourages quick agreement rather than careful review.

Broader Industry Context

The shift reflects an unsettled period for data policies across the sector. OpenAI is under a court order requiring it to keep all ChatGPT conversations indefinitely, including deleted ones, as part of an ongoing legal case. Only enterprise contracts with zero data retention remain exempt. Such developments highlight how little control many individuals have over their data once it enters these platforms.

User Awareness

Privacy specialists warn that the complexity of these terms makes genuine consent difficult. Settings that appear straightforward, such as delete functions, may not behave as users expect. With policies changing rapidly and notices often buried among other company updates, many people remain unaware of what agreements they have accepted or how long their information stays stored.

Notes: This post was edited/created using GenAI tools.
